Fabrics Application#

| Property | Value |
| --- | --- |
| Group/Version | fabrics.eda.nokia.com/v1 |
| Supported OS | Nokia SR Linux: 24.10.*, 25.3.*, 25.7.*, 25.10.*, 26.3.*; Nokia SR OS: 24.10.r4+, 25.3.r2+, 25.7.*, 25.10.*, 26.3.*; Arista EOS: 4.33.2f:beta; Cisco NX-OS: 10.5.2:alpha |
| Catalog | Nokia/catalog/fabrics |
| Source Code | coming soon |

The Fabric application streamlines the construction and deployment of data center fabrics, suitable for environments ranging from small, single-node edge configurations to large, complex multi-tier and multi-pod networks. It automates crucial network configurations such as IP address assignments, VLAN setups, and both underlay and overlay network protocols.

The application provides the following components:

Fabric topologies#

The Fabric application supports highly flexible deployment models, enabling you to tailor the configuration of your data center fabric to suit different architectural needs. You can deploy a single instance of a Fabric resource to manage the entire data center, incorporating all network nodes, or you can opt to divide your data center into multiple, smaller Fabric instances.

Deployment model example

For example, you might deploy one Fabric instance to manage the superspine and borderleaf layers while deploying a separate Fabric instance for each pod within the data center. This modular approach allows for more granular control. Taken to the extreme, each layer of a data center fabric can be its own Fabric instance. The choice is yours!
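The split described above can be sketched in Python. This is only an illustration of the grouping idea, not the EDA API: the inventory, the `group_into_fabrics` function, and the `core` fabric name are hypothetical.

```python
from collections import defaultdict

# Hypothetical inventory: node name -> (layer, pod).
# The pod is None for layers that are shared across pods.
nodes = {
    "superspine1": ("superspine", None),
    "borderleaf1": ("borderleaf", None),
    "pod1-spine1": ("spine", "pod1"),
    "pod1-leaf1": ("leaf", "pod1"),
    "pod2-spine1": ("spine", "pod2"),
    "pod2-leaf1": ("leaf", "pod2"),
}


def group_into_fabrics(inventory):
    """Assign nodes to one fabric per pod, plus a 'core' fabric for shared layers."""
    fabrics = defaultdict(list)
    for name, (_layer, pod) in sorted(inventory.items()):
        fabrics[pod or "core"].append(name)
    return dict(fabrics)


fabrics = group_into_fabrics(nodes)
```

Here the superspine and borderleaf nodes end up in one group and each pod in its own, mirroring the example deployment model.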

A Fabric is a collection of network nodes that are interconnected using Inter-Switch Links (ISLs). ISLs are point-to-point links where each endpoint is a node in the fabric. Edge links, which have only one endpoint attached to the Fabric, are modeled through the Link resource.
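The difference between ISLs and edge links can be expressed as a small classification over link endpoints. The sketch below is a conceptual illustration only; the `Link` class, the `classify_link` function, and the node names are hypothetical and do not reflect the EDA resource model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    """A point-to-point link between two endpoints (hypothetical model)."""
    a: str
    b: str


def classify_link(link: Link, fabric_nodes: set[str]) -> str:
    """Classify a link by how many of its endpoints are nodes of the fabric."""
    inside = sum(endpoint in fabric_nodes for endpoint in (link.a, link.b))
    if inside == 2:
        return "isl"       # both endpoints are fabric nodes
    if inside == 1:
        return "edge"      # only one endpoint is attached to the fabric
    return "external"      # neither endpoint belongs to this fabric


fabric_nodes = {"leaf1", "leaf2", "spine1"}
links = [Link("leaf1", "spine1"), Link("leaf2", "spine1"), Link("leaf1", "server1")]
kinds = [classify_link(link, fabric_nodes) for link in links]
```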

Clos fabrics are designed to scale with deployment size, ranging from very small to very large networks.

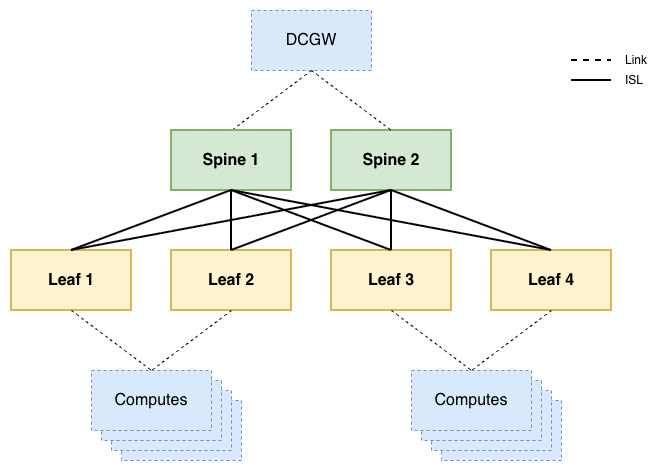

2-tier clos Fabric#

This topology focuses on small to medium deployments with a couple of racks, where leaf[^1] switches are interconnected through spines[^2]. Leaf switches are often chosen for their port capabilities in terms of speed and connector types, while spine switches are optimized for forwarding capacity.

Typically, computes are attached to Bridge Domains or Routers. To facilitate external connectivity to and from these computes, the reachability information for the IP subnets that are available within the fabric is exchanged with Datacenter Gateway[^3] (DCGW) routers, using one of two methods:

- PE-CE connection type A: exchange IP-only routes using a routing protocol like OSPF or BGP
    - Requires strict separation of IP subnets between datacenter fabrics
- PE-CE connection type B: exchange service routes using Multi-Protocol BGP
    - Allows for stretched layer 2 services between fabrics

The requirements of your network determine which type should be used.
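Type A depends on IP subnets being strictly separated between datacenter fabrics. One way to check a plan for that property is sketched below, using Python's standard `ipaddress` module. The `overlapping_subnets` function, the fabric names, and the subnets are made up for illustration.

```python
import ipaddress
from itertools import combinations


def overlapping_subnets(fabric_subnets: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return pairs of subnets that belong to different fabrics and overlap."""
    conflicts = []
    for (fab_a, nets_a), (fab_b, nets_b) in combinations(fabric_subnets.items(), 2):
        for a in nets_a:
            for b in nets_b:
                if ipaddress.ip_network(a).overlaps(ipaddress.ip_network(b)):
                    conflicts.append((f"{fab_a}:{a}", f"{fab_b}:{b}"))
    return conflicts


# Hypothetical subnet plan for two datacenter fabrics.
subnets = {
    "dc1": ["10.1.0.0/16", "10.10.0.0/24"],
    "dc2": ["10.2.0.0/16", "10.10.0.0/25"],
}
conflicts = overlapping_subnets(subnets)
```

An empty result suggests the plan is compatible with type A. Any conflict means either renumbering or considering type B, which allows layer 2 services to be stretched between fabrics.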

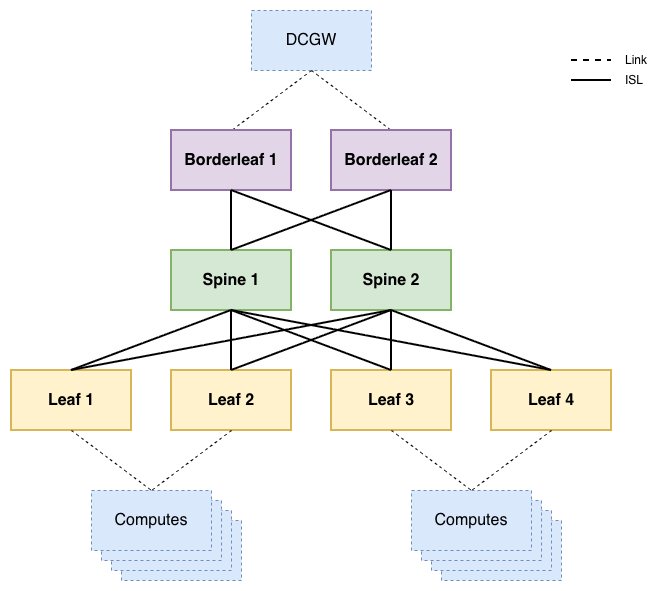

3-tier clos Fabric#

The difference between a 2-tier and a 3-tier fabric is the addition of borderleafs. Borderleafs are required when the spines don't support service termination. In this scenario, the spines only provide IP routing for the VXLAN transport tunnels and are not aware of the services that run on the fabric. This is common in fabrics with more than 32 leafs (16 racks). Spines don't scale well horizontally, because every leaf is expected to attach to every spine to limit the number of hops between any two computes.
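The full-mesh requirement between leafs and spines is what drives link and port counts as the fabric grows. The following sketch makes the arithmetic explicit, assuming exactly one ISL per leaf-spine pair. That assumption and the `leaf_spine_sizing` function name are illustrative and not taken from the application.

```python
def leaf_spine_sizing(num_leafs: int, num_spines: int) -> dict[str, int]:
    """Link and port counts for a full leaf-spine mesh (one ISL per pair assumed)."""
    return {
        "isl_count": num_leafs * num_spines,  # one ISL per leaf-spine pair
        "uplinks_per_leaf": num_spines,       # each leaf attaches to every spine
        "downlinks_per_spine": num_leafs,     # each spine attaches to every leaf
    }


sizing = leaf_spine_sizing(num_leafs=32, num_spines=2)
```

Adding a spine adds one uplink on every leaf, while adding a leaf consumes one port on every spine.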

The borderleafs are functionally the same as leafs, but often differ in port speeds: leafs may have 10 Gbps downlinks towards compute servers, whereas borderleafs provide 100 Gbps connectivity to firewalls, DCGWs[^3], internet gateways, and similar devices.

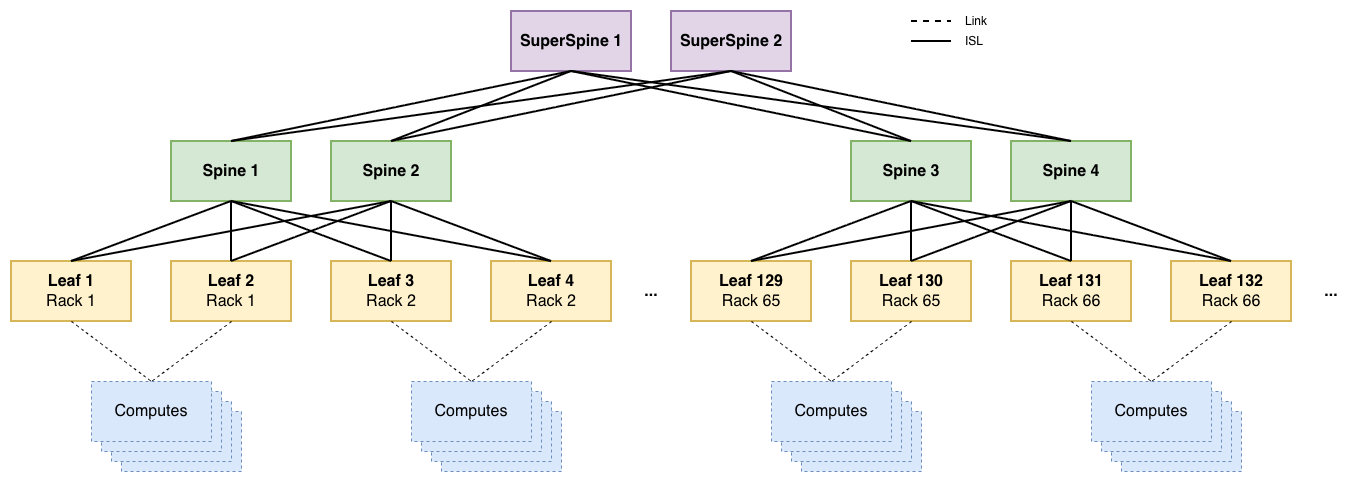

Clos fabric with superspines#

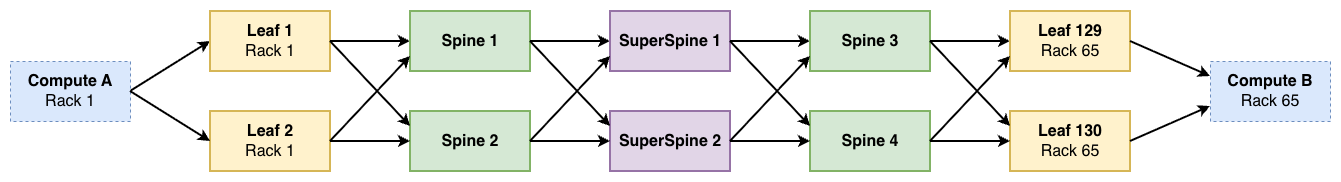

Superspines are introduced in the case where it is no longer feasible to vertically scale the spine nodes, which is typical once the number of leafs exceeds 128 (64 racks). In these hyper-scaled scenarios, an additional hierarchical layer is required.

With this additional layer, there are at most 6 hops between any two computes instead of 4.
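The hop counts follow from the number of hierarchical switch layers: traffic climbs from the source compute to the top layer and descends again, crossing one link per layer in each direction. The sketch below assumes that a hop is one traversed link, including the compute-to-leaf links, and that borderleafs sit at the same layer as leafs. The 128-leaf threshold is the rule of thumb from this section, and both function names are hypothetical.

```python
SUPERSPINE_LEAF_THRESHOLD = 128  # rule of thumb from the text (64 racks)


def switch_layers(num_leafs: int) -> int:
    """Rough layer count: leaf + spine, plus superspine for very large fabrics."""
    return 3 if num_leafs > SUPERSPINE_LEAF_THRESHOLD else 2


def max_hops(layers: int) -> int:
    """Worst-case number of links traversed between two computes."""
    if layers < 1:
        raise ValueError("a fabric needs at least one switch layer")
    return 2 * layers  # up through every layer, then back down


hops_small = max_hops(switch_layers(32))   # leaf-spine fabric
hops_large = max_hops(switch_layers(256))  # fabric with superspines
```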

[^1]: Leaf switches connect directly to computes in the rack and are often called top-of-rack switches. They are commonly deployed in pairs for redundancy.

[^2]: Spine switches interconnect leaf switches and often take on the role of route reflectors for the EVPN routes of the entire fabric. They are commonly deployed in pairs for redundancy.

[^3]: Datacenter Gateway (DCGW) is a generic name for routers that interconnect datacenters with each other and with the WAN.